AI art generators have received much attention this year, both for their spectacular achievements and for their questionable applications. One of the biggest players in this sector is OpenAI's DALL-E. It is now available to the general public and to developers, and it will soon be integrated into Microsoft software and the Bing search engine.

Shutterstock will also integrate the tool and compensate artists, both to give back and, hopefully, to avoid ethical difficulties. After all, Shutterstock imagery was used to train the DALL-E AI. But how exactly do you work with DALL-E? Is it as simple as entering a description, called a prompt, and receiving a picture? To be honest, we believe so. But there is a lot more to consider if you want to come close to perfection. Let us walk through it in this comprehensive guide on how to use DALL-E.

DALL-E is an AI image generator that uses deep learning to convert textual descriptions into corresponding images. Developed by OpenAI, DALL-E demonstrates the power of generative models to turn abstract concepts and ideas into tangible visual representations. Its image generation process relies on neural networks that learn to associate specific words and phrases with corresponding visual features. Through training, DALL-E has acquired the ability to generate a wide variety of images, including everyday objects, animals, scenes, and even abstract concepts that might not have been directly present in the training data.

As an image generator, DALL-E provides users a powerful tool to explore and express their creativity. By translating textual descriptions into vivid visual outputs, it opens up new possibilities for artists, designers, and creators to visualize their ideas, experiment with different concepts, and generate visually stunning compositions.

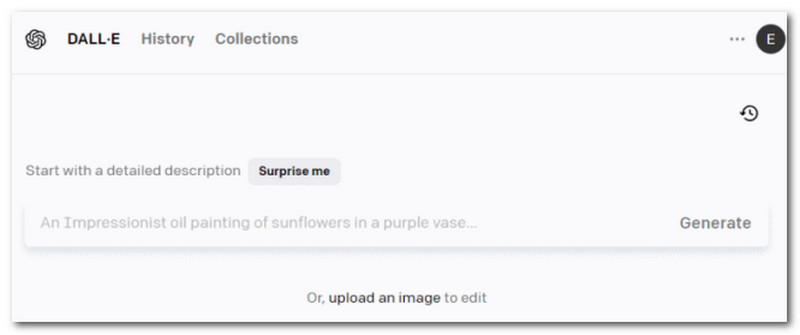

In this section, we give you step-by-step guidelines on how to use the DALL-E AI Art Generator. Before digging in, make sure you can access DALL-E, which runs online in your web browser. After that, we can proceed with the following steps.

Create a DALL-E Account

The first step is registering at labs.openai.com. Create a DALL-E login with an email address and a strong password, or use a Google or Microsoft account. There is no option for multi-factor authentication.

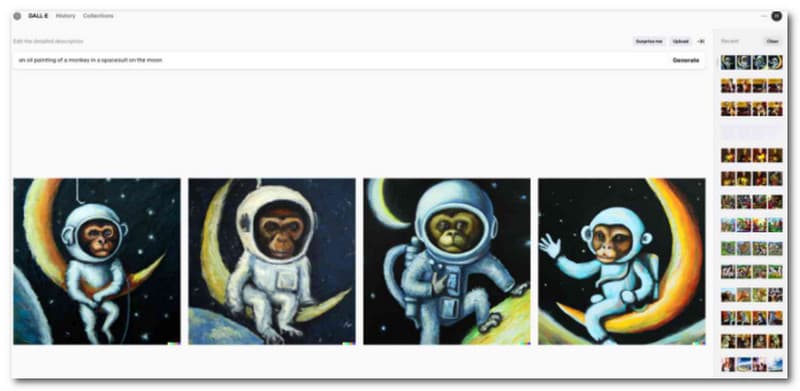

Prompting Images

After signing up, you'll be presented with a form for your prompt. When you click the Surprise Me button, a random prompt is placed into the text box; this does not count against your credits until you click Generate. You can also upload your own image and use DALL-E to edit it, either to add new AI-generated content or to create whole new variants of the original.

Image Variant

For any image you create in DALL-E, or any image you upload to DALL-E (be sure you own the copyright), you can generate an instant variant. Uploaded photos must be cropped to a square, 1:1 ratio image.
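If you prepare uploads yourself, the square-crop requirement comes down to a little arithmetic. The sketch below is only an illustration: the function name `center_square_box` is hypothetical, and it assumes you want the largest square centered in the image, which is one reasonable choice rather than anything DALL-E prescribes.

```python
def center_square_box(width: int, height: int) -> tuple[int, int, int, int]:
    """Return (left, top, right, bottom) for the largest centered square.

    Hypothetical helper: DALL-E only requires a 1:1 image; centering the
    crop is an assumption made for this example.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    side = min(width, height)          # the square's side is the shorter edge
    left = (width - side) // 2         # trim equal amounts from both sides
    top = (height - side) // 2
    return (left, top, left + side, top + side)


# A 1920 x 1080 landscape photo keeps its middle 1080 x 1080 region.
print(center_square_box(1920, 1080))  # (420, 0, 1500, 1080)
```

The returned tuple follows the (left, upper, right, lower) order used by common image libraries, so it can usually be passed straight to a crop call in the tool of your choice.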

Edit: Erase DALL-E Image

Assume you've made an image with Dall-E that you like. Mostly. But something isn't quite right. Select Edit and use the Eraser tool to eliminate the part you don't like, then rewrite part of the prompt to address that section.

Edit: Enlarge DALL-E Image

Another option under Edit is to add Generation Frames. Click the Add Generation Frame symbol in the upper left, which looks like a box with a plus sign, and you'll get a floating box that you can position anywhere, including beyond the image's boundary.

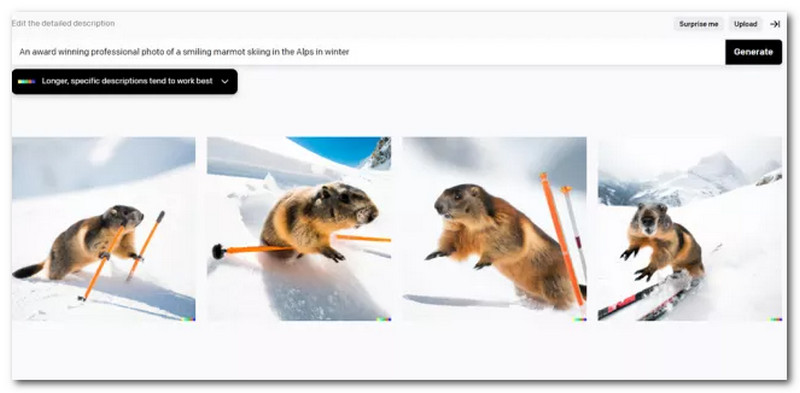

How do you write an effective prompt for DALL-E, given that you get out what you put in? In short, assume your image already exists in some kind of online gallery, and then write the kind of short caption you might see alongside it.

Make it specific

If you enter a single word, such as runner, you could get anything from a photo of an elite athlete finishing a marathon to a lovely pencil sketch of a toddler running through a meadow or, as seen in the example above, even a made-up creature! Instead of a single word, use a phrase that describes what you want.

Directive Details

Rather than just mentioning oil painting, you could say oil-on-canvas, Caravaggio masterpiece from 1599, or HD photograph, Canon camera, studio lighting, large-format portrait on Kodak ColorPlus 200 film. Incorporating these characteristics into your prompt helps the AI determine the type of image you intend, even if it does not always get it exactly right.
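If you reuse the same style details across many prompts, it can help to assemble them programmatically. The following is a minimal sketch, assuming a comma-separated prompt is what you want to paste into the text box; `build_prompt` is a hypothetical helper name, not part of DALL-E.

```python
def build_prompt(subject: str, *details: str) -> str:
    """Join a subject and optional style details into one comma-separated prompt.

    Hypothetical helper for illustration; DALL-E itself just takes free text.
    """
    parts = [subject.strip()] + [d.strip() for d in details]
    # Skip empty entries so stray blanks do not produce doubled commas.
    return ", ".join(p for p in parts if p)


prompt = build_prompt(
    "a runner crossing a marathon finish line",
    "HD photograph",
    "Canon camera",
    "studio lighting",
)
print(prompt)
# a runner crossing a marathon finish line, HD photograph, Canon camera, studio lighting
```

Keeping the subject separate from the details makes it easy to try the same scene as, say, an oil-on-canvas painting by swapping only the detail list.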

Avoid Blunders

Because AI generation has inherent limitations, some picture prompts are less likely to have the desired impact.

DALL-E and its successor, DALL-E 2, are groundbreaking generative models developed by OpenAI that have revolutionized the field of artificial intelligence and creativity. These models utilize deep learning techniques to generate images from textual descriptions, enabling AI to exhibit great artistic capabilities. This comprehensive review will delve into the advancements and improvements offered by DALL-E 2 compared to its predecessor.

One of the notable enhancements in DALL-E 2 is its improved image quality and resolution. While DALL-E could already generate impressive visuals, DALL-E 2 takes this further, producing more detailed and realistic images. The higher resolution allows for finer textures, sharper edges, and better overall visual fidelity. The output images from DALL-E 2 show a noticeable improvement in visual appeal and clarity.

DALL-E 2 introduces several key features that give users greater control and flexibility over the generated images. The model allows users to influence the image generation process through interactive prompts, where specific edits can be made to guide the output in desired directions. This level of control enables users to fine-tune and iterate on their creative vision, resulting in more personalized and tailored results.

DALL-E 2 significantly improves its understanding of complex textual descriptions, offering a broader vocabulary and a deeper grasp of concepts. This expanded knowledge base allows the model to interpret nuanced instructions better, resulting in more accurate and contextually appropriate image generation. Users can now describe complex scenes, abstract concepts, and intricate visual details, and DALL-E 2 will produce images that align with their intended meaning more effectively.

| | DALL-E | DALL-E 2 |
| --- | --- | --- |
| Price | $2 | $15 |
| Release Date | January 05, 2021 | September 22, 2022 |
| Resolutions | 1024 x 1024, 512 x 512, and 256 x 256 pixels | 1024 x 1024, 512 x 512, and 256 x 256 pixels |
| Bug Protection | Standard | Standard, less faulty |
| Quality | 9.0 | 9.5 |
| Flexibility | 9.0 | 9.0 |
| Vocabulary | 8.5 | 8.5 |

DALL-E is not completely free. The service is based on credits. You receive 50 free credits upon signup and 15 free credits each month after that, but free credits do not roll over. Paid credits roll over monthly for up to 12 months; get 115 credits for $2 to $15. One credit pays for one AI art generation (four new images per normal generation). That may start with a prompt, but a credit is also spent when you make a variation of already generated work. You can use up a lot of credits trying to find the right AI-generated image.
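To get a feel for how far the free credits go, the figures above can be turned into a quick calculation. This is a rough sketch under stated assumptions: it treats month 0 as the signup month with 50 credits and every later month as 15, and it assumes every credit is spent on a normal generation that returns four images. The function names are hypothetical.

```python
SIGNUP_CREDITS = 50          # free credits granted at signup (from the guide)
MONTHLY_FREE_CREDITS = 15    # free credits in each later month (do not roll over)
IMAGES_PER_GENERATION = 4    # a normal prompt generation returns four images


def free_credits_for_month(month_index: int) -> int:
    """Free credits granted in a given month; month 0 is the signup month (assumption)."""
    if month_index < 0:
        raise ValueError("month_index must be zero or greater")
    return SIGNUP_CREDITS if month_index == 0 else MONTHLY_FREE_CREDITS


def max_images(credits: int) -> int:
    """Upper bound on images if every credit goes to a normal generation."""
    if credits < 0:
        raise ValueError("credits must be zero or greater")
    return credits * IMAGES_PER_GENERATION


print(max_images(free_credits_for_month(0)))  # 200 images from the signup credits
print(max_images(free_credits_for_month(3)))  # 60 images in a later month
```

In practice the usable number is lower, since edits and variations also consume credits and several attempts are often needed per finished image.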

How can we input a textual description to generate images with DALL-E?

You must provide a textual prompt or description to use DALL-E's image generator. Simply type in your desired description or specify the concept, attributes, or scene you want the generated image to depict. DALL-E will then interpret your input and generate an image based on that description.
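For developers, the same idea of "type a description, get an image" can be expressed as an HTTP request. The sketch below is an assumption layered on top of this guide, which only covers the web interface: it builds (but deliberately does not send) a request for OpenAI's public image generation endpoint, using the `model`, `prompt`, `n`, and `size` fields as I understand them. The helper name `build_image_request` is hypothetical, and model availability and pricing may differ from what is described here, so check the current API documentation before relying on it.

```python
import json
import urllib.request

# Assumed endpoint and sizes; verify against OpenAI's current API reference.
API_URL = "https://api.openai.com/v1/images/generations"
ALLOWED_SIZES = {"256x256", "512x512", "1024x1024"}


def build_image_request(prompt: str, api_key: str, n: int = 1,
                        size: str = "1024x1024") -> urllib.request.Request:
    """Build an unsent POST request describing one image generation job."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if size not in ALLOWED_SIZES:
        raise ValueError(f"size must be one of {sorted(ALLOWED_SIZES)}")
    payload = {"model": "dall-e-2", "prompt": prompt, "n": n, "size": size}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# "YOUR_API_KEY" is a placeholder; nothing is sent over the network here.
request = build_image_request("a pencil sketch of a toddler running through a meadow",
                              "YOUR_API_KEY", size="512x512")
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would actually submit it, which requires a valid key and consumes paid usage, so the example stops short of that step.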

Can we control the output of DALL-E to match our preferences?

Yes, DALL-E offers a certain level of control over the generated images. You can experiment with different prompts, modify specific details or attributes within the prompt, or provide additional instructions to guide the image generation process. This allows you to fine-tune the output and align it more closely with your creative vision.

Is DALL-E 2 free to use?

DALL-E 2 ended its waiting list and opened the platform to the public in September 2022. Users begin with 50 free credits to turn prompts into fully developed artwork, followed by 15 free credits each month. You can also buy more credits on the website.

What are the limitations or constraints when using DALL-E?

While DALL-E is an impressive tool, it does have some limitations. First, it may not always produce the exact image you have in mind, because the model's interpretation of a prompt can differ from yours. Second, its output is shaped by the training data it was exposed to, which means it may not generate completely novel or original concepts. Finally, generating images with highly specific or rare attributes may be challenging, as the training data might not cover all possible variations.

Are there any ethical considerations when using DALL-E's image generator?

As with any AI tool, there are ethical considerations when using DALL-E's image generator. Ensuring that the generated images align with societal norms and ethical guidelines is important. OpenAI has implemented content filtering mechanisms to mitigate risks and prevent misuse. Users should responsibly use DALL-E to avoid generating harmful or inappropriate content and adhere to OpenAI's terms of service and usage guidelines.

Conclusion

With DALL-E, users can provide textual prompts and descriptions to generate high-quality images that align with their creative vision. By experimenting with different prompts, using the interactive editing controls, and refining instructions, users can exercise greater control over the output and tailor it to their preferences. We hope this guide has helped you get to know DALL-E better. If it did, feel free to share it with friends who might need it.
